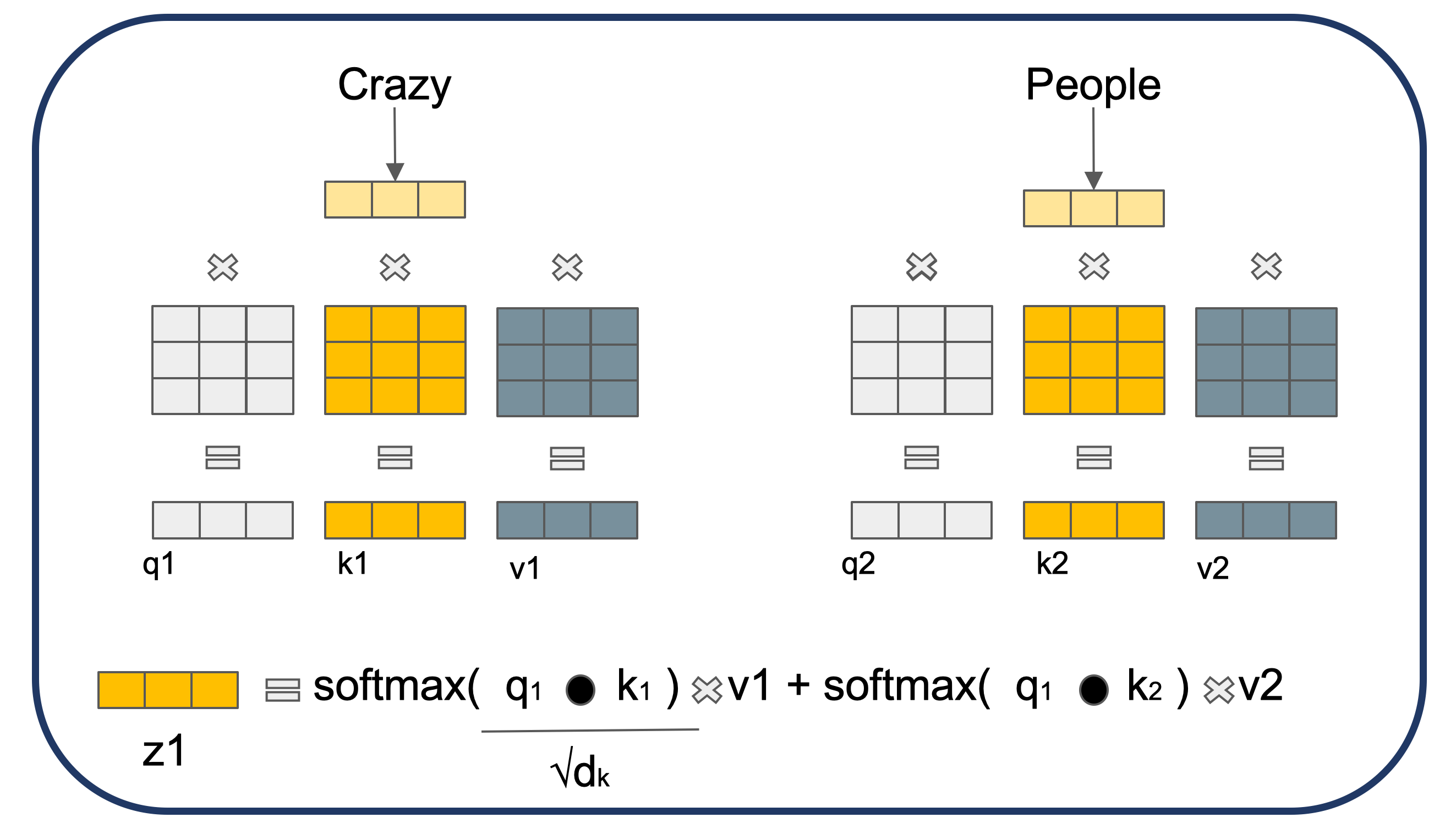

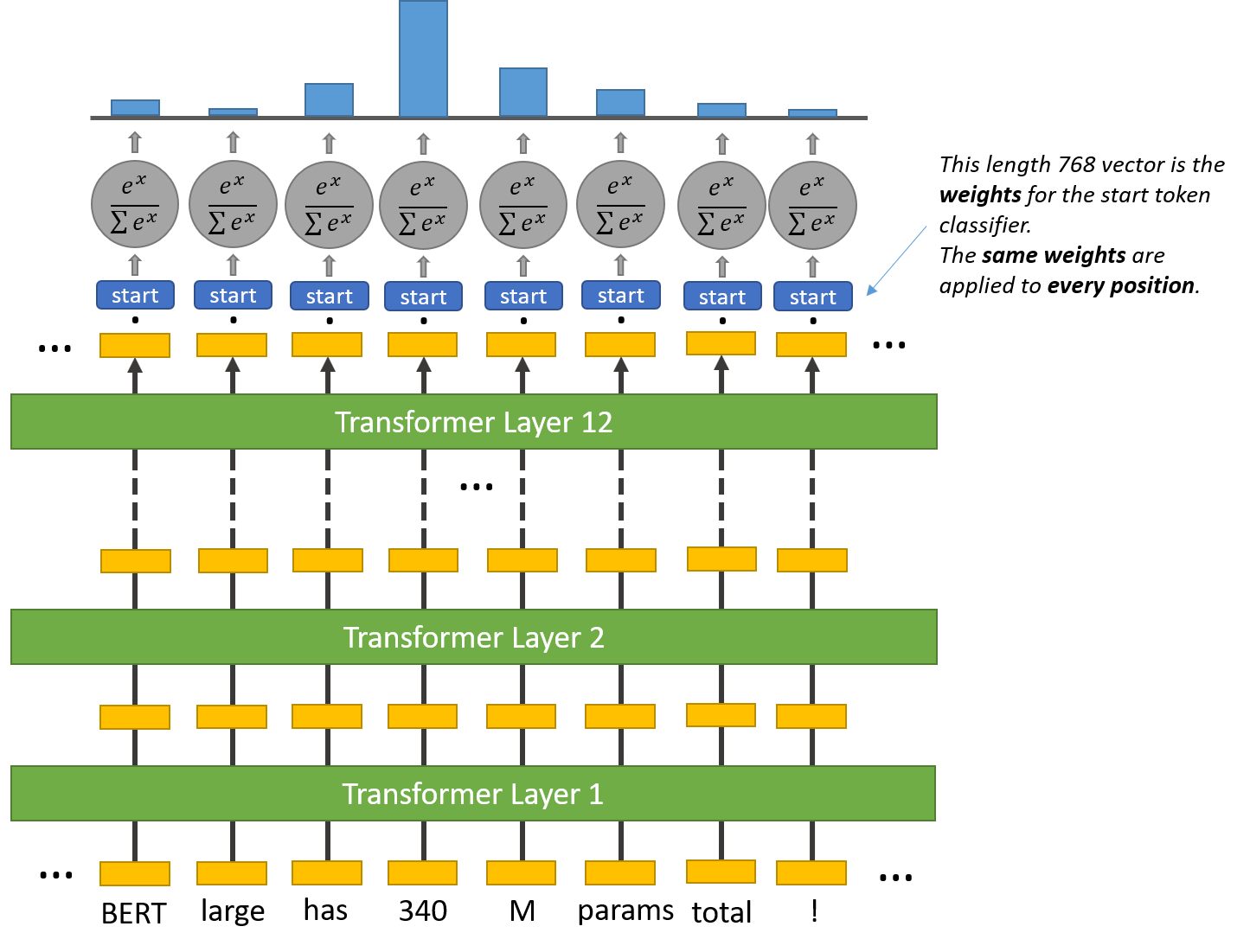

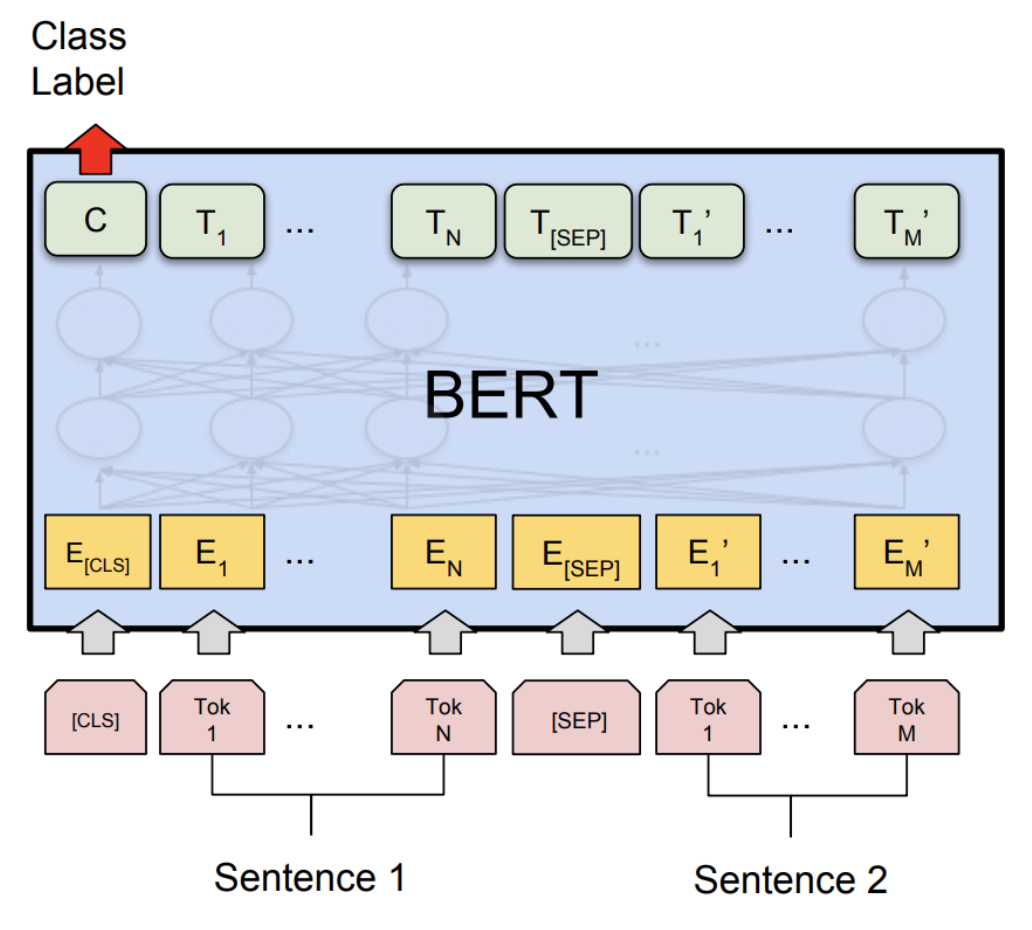

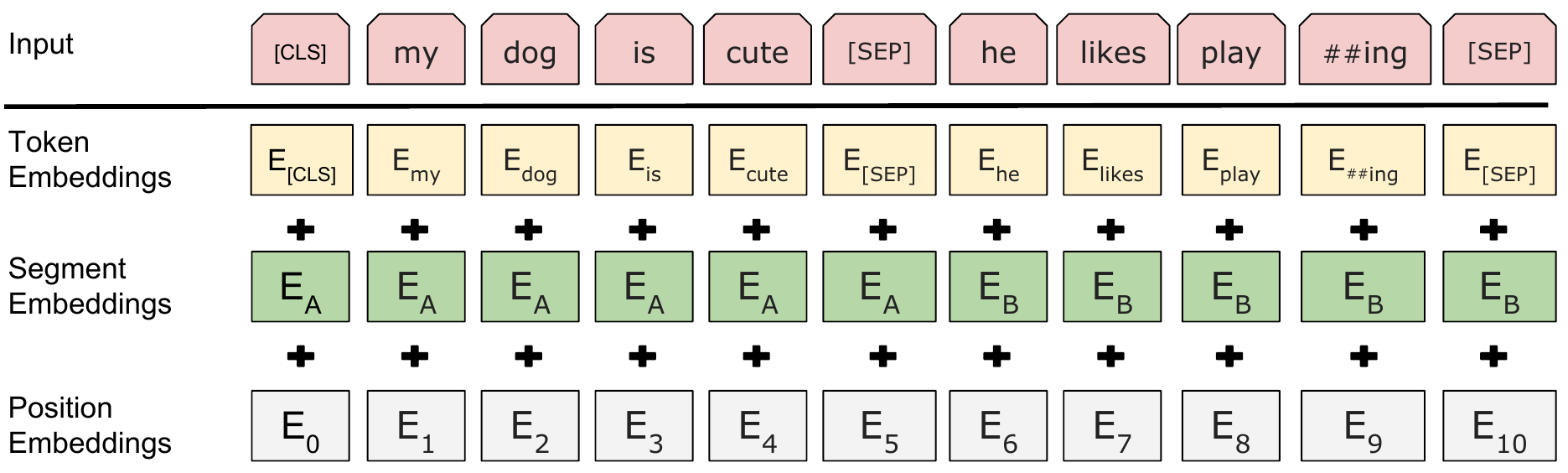

Figure 3: A visualisation of how inputs are passed through BERT with overlap...

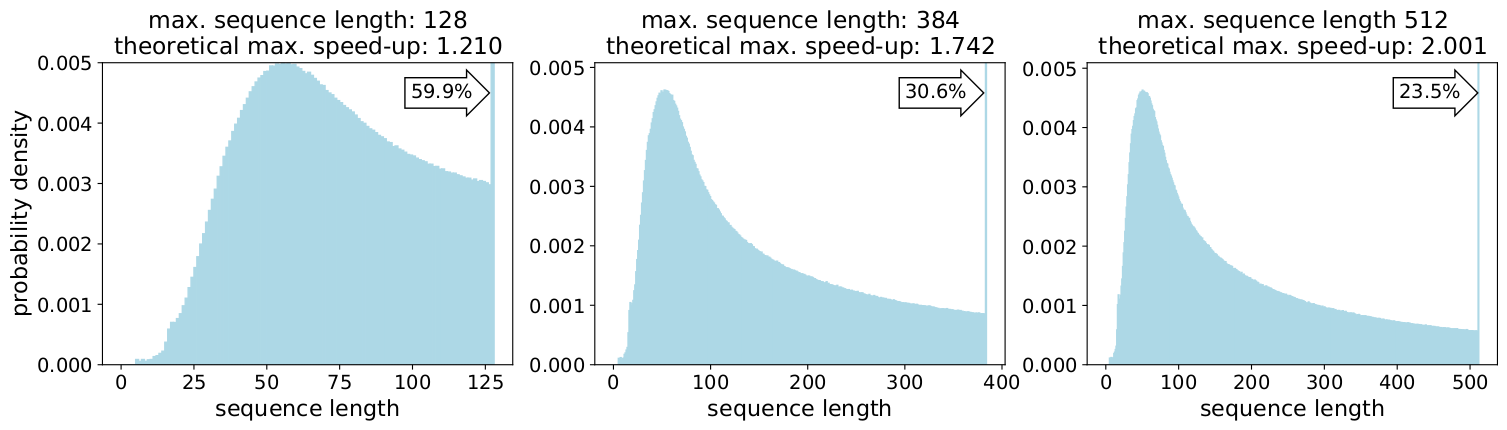

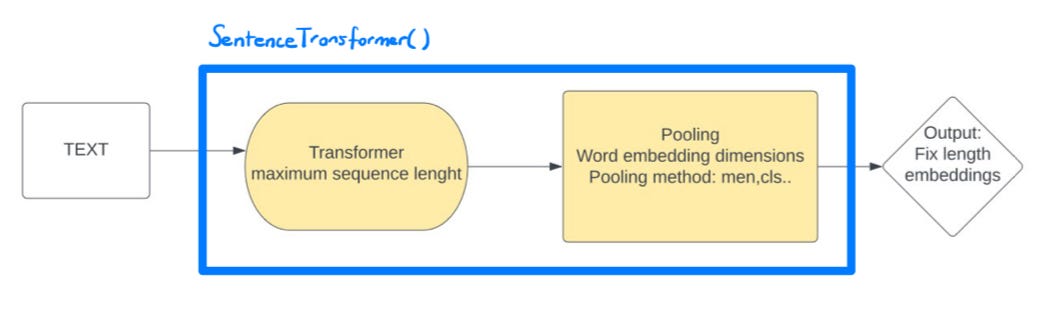

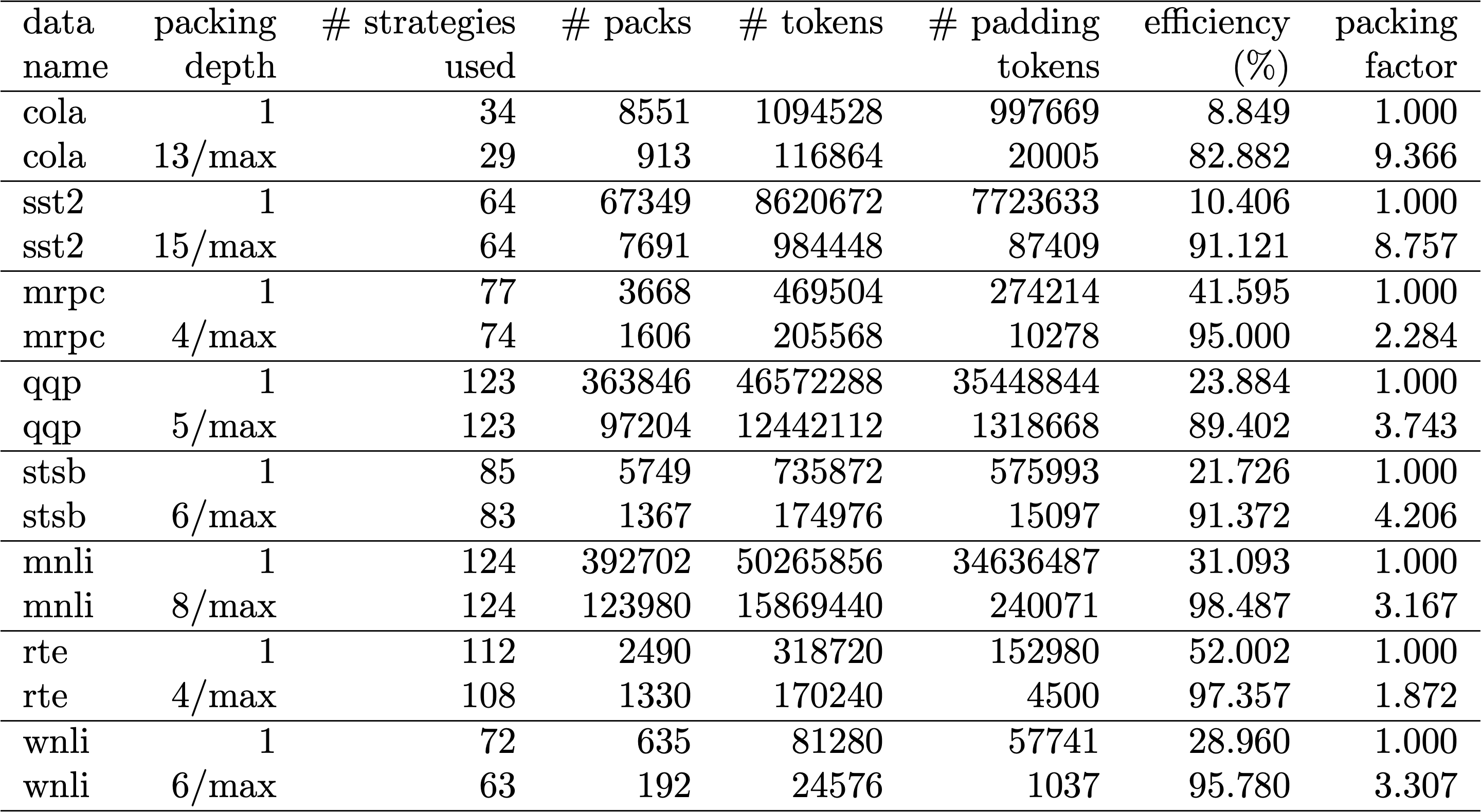

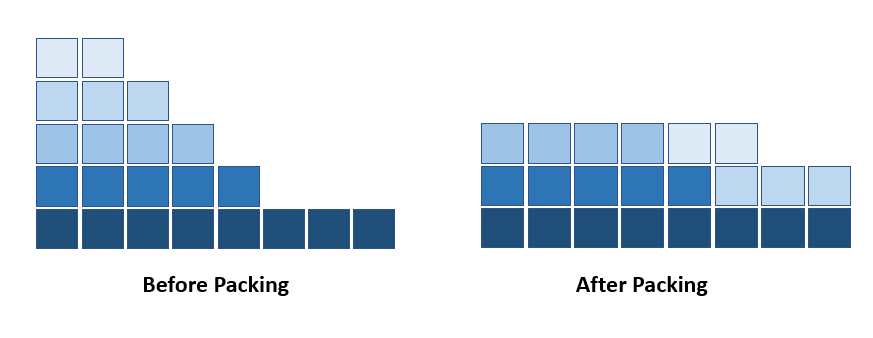

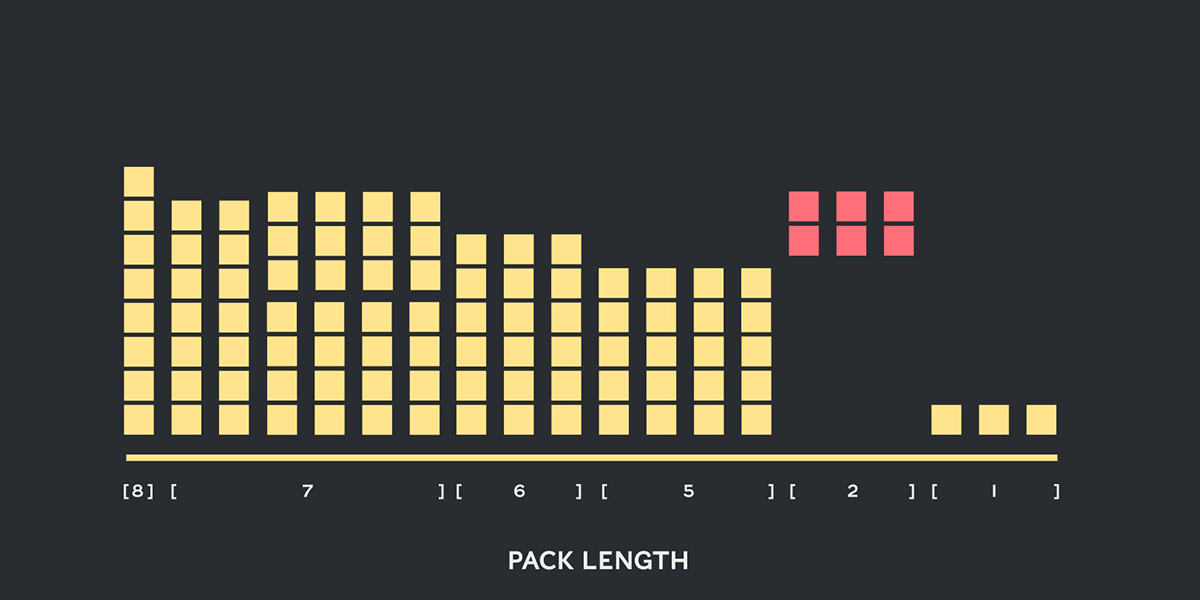

Introducing Packed BERT for 2x Training Speed-up in Natural Language Processing | by Dr. Mario Michael Krell | Towards Data Science

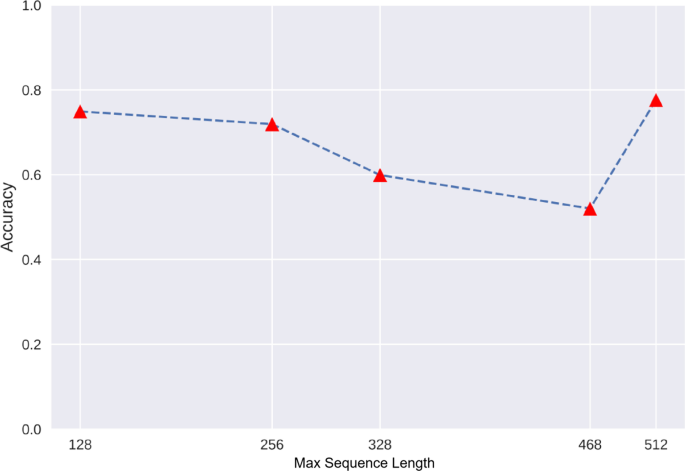

Results of BERT4TC-S with different sequence lengths on AGnews and DBPedia.

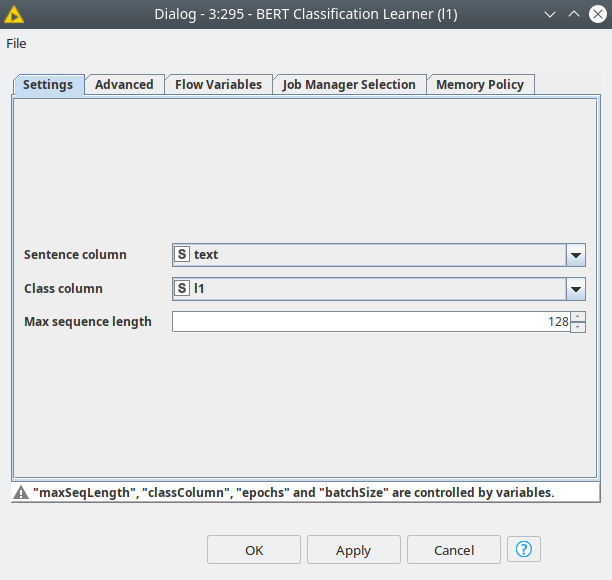

Why do BERT classification do worse with longer sequence length? - Data Science Stack Exchange